Abstract

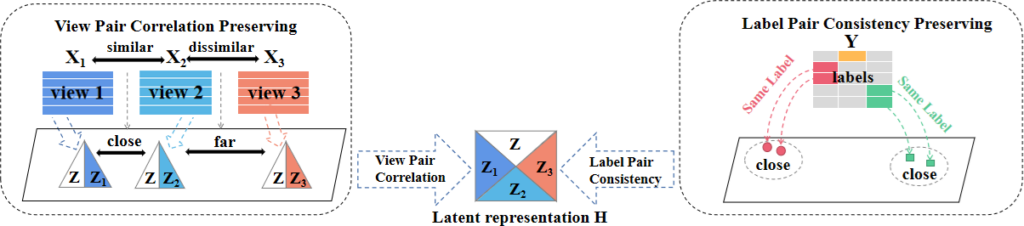

In multi-view learning, making optimal use of the features from different views to improve performance on predictive tasks is a challenging objective. A broad range of approaches has been proposed for this purpose. However, existing works focus on capturing either (1) the common and complementary information across views or (2) the underlying between-view relationships, by exploiting the similarities of view pairs. Moreover, for the latter, we find that the obtained similarities do not adequately reflect the differences among views. To address these issues, we propose a novel approach called MELTS (Multi-viEw LatenT space learning with Similarity preservation) for multi-view classification. MELTS first uses distance correlation to explore hidden between-view relationships. Furthermore, assuming that different views share certain common information while each view also carries its own unique information, the method leverages both (1) the similarity information of different view pairs and (2) the label information of distinct sample pairs to learn a latent representation across multiple views. Experimental results on both synthetic and real-world datasets demonstrate that MELTS considerably improves classification accuracy compared with alternative methods.
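To illustrate the kind of between-view similarity the abstract refers to, the following is a minimal sketch of the standard sample (V-statistic) distance correlation between two views of the same n samples, plus a pairwise view-similarity matrix built from it. This is an assumption-laden illustration rather than the MELTS implementation: the function names `distance_correlation` and `view_similarity_matrix` are hypothetical, and MELTS may normalize, weight, or otherwise use the statistic differently.

```python
import math
from typing import List, Sequence

# A view is an n x d_v matrix: one row of features per sample.
Matrix = Sequence[Sequence[float]]


def _double_centered_distances(X: Matrix) -> List[List[float]]:
    """Pairwise Euclidean distances between the rows of X, double-centered."""
    n = len(X)
    d = [[math.dist(X[j], X[k]) for k in range(n)] for j in range(n)]
    # The distance matrix is symmetric, so column means equal row means.
    row_means = [sum(row) / n for row in d]
    grand_mean = sum(row_means) / n
    return [[d[j][k] - row_means[j] - row_means[k] + grand_mean
             for k in range(n)] for j in range(n)]


def distance_correlation(X: Matrix, Y: Matrix) -> float:
    """Sample distance correlation between two views (hypothetical helper).

    X and Y must describe the same n samples, but may have different
    feature dimensions. Returns a value in [0, 1].
    """
    if len(X) != len(Y):
        raise ValueError("both views must describe the same samples")
    n = len(X)
    A = _double_centered_distances(X)
    B = _double_centered_distances(Y)
    # Squared distance covariance and squared distance variances.
    dcov2 = sum(A[j][k] * B[j][k] for j in range(n) for k in range(n)) / n**2
    dvar2_x = sum(a * a for row in A for a in row) / n**2
    dvar2_y = sum(b * b for row in B for b in row) / n**2
    denom = math.sqrt(dvar2_x * dvar2_y)
    if denom == 0.0:
        # A constant view carries no dependence information.
        return 0.0
    # Clamp tiny negative values caused by floating-point rounding.
    return math.sqrt(max(dcov2 / denom, 0.0))


def view_similarity_matrix(views: Sequence[Matrix]) -> List[List[float]]:
    """Symmetric matrix of pairwise distance correlations between views."""
    m = len(views)
    S = [[0.0] * m for _ in range(m)]
    for i in range(m):
        for j in range(i, m):
            S[i][j] = S[j][i] = distance_correlation(views[i], views[j])
    return S
```

Because distance correlation only compares within-view pairwise distances, it can relate views whose feature spaces have different dimensionalities, and it captures nonlinear as well as linear dependence; for example, a view that is an affine rescaling of another yields a distance correlation of 1.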